The AI Enablement Brief · Mar 30, 2026

ChatGPT Ads: The Audience Problem

The early data looks bad. But everyone’s asking the wrong question.

ChatGPT ads are showing a 0.91% click-through rate.

One advertiser could spend only 3% of a $250,000 budget (about $7,500) after several weeks because the platform couldn’t push enough volume.

The hot takes are already writing themselves: ads in LLMs don’t work, conversational interfaces aren’t ad surfaces, the format is fundamentally broken.

I’m not so sure. And I think most people are asking the wrong question.

The Audience Mismatch

Before we declare the format dead, it’s worth looking at who’s actually seeing these ads.

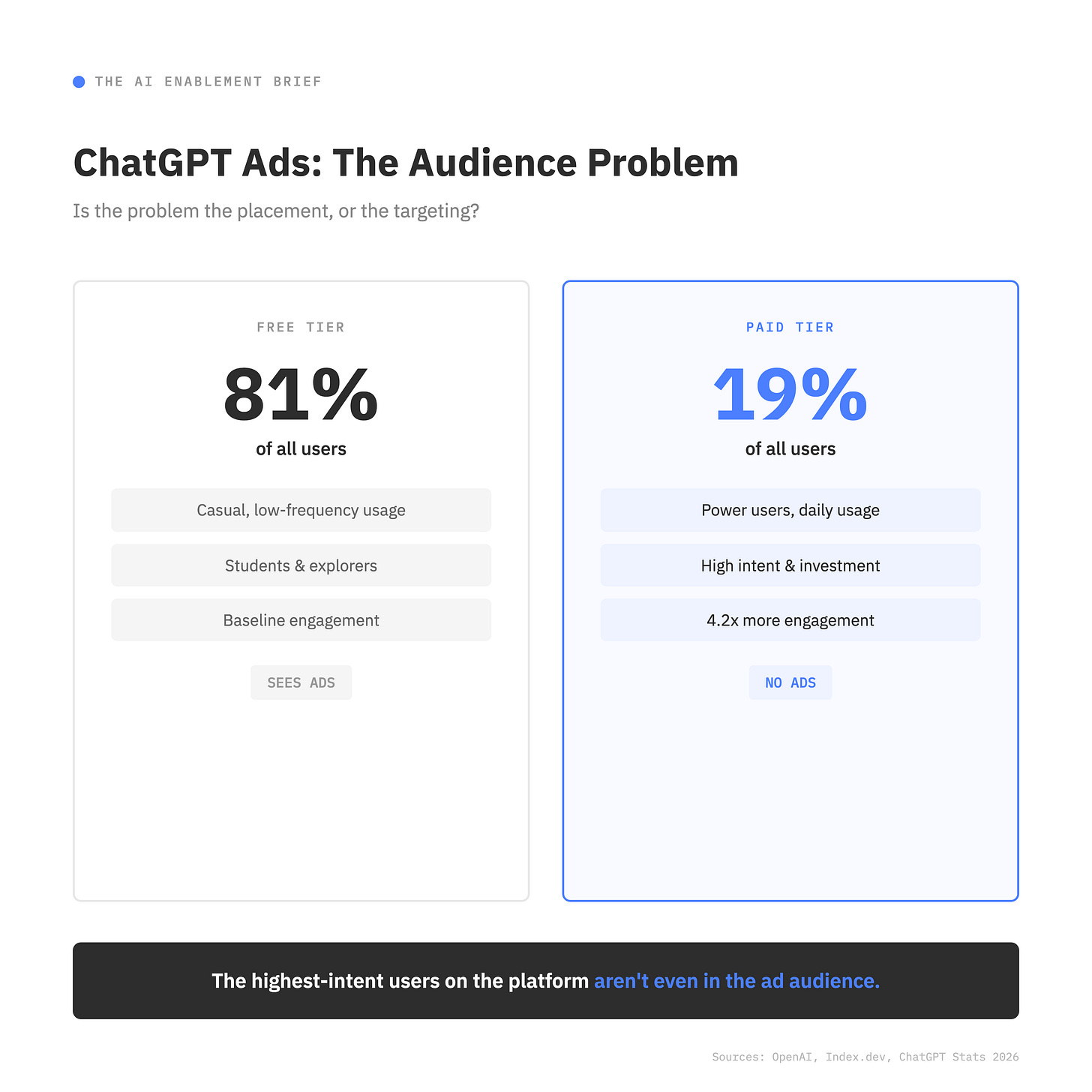

81% of ChatGPT’s users are on the free tier. Students, casual users, people checking in once or twice a week.

The paid tier — Plus, Pro, Business, Enterprise — represents 19% of the user base. Those paid users engage 4.2 times more per week than free users. They’re the power users, the daily operators, the ones with genuine commercial intent.

And they see zero ads. Ads are served only to Free and Go tier users; Plus, Pro, Business, and Enterprise are all ad-free.

So when we look at a 0.91% CTR and say “the format doesn’t work,” we’re really saying “the format doesn’t work on casual, low-intent users who check in once a week.”

That’s a very different statement. The highest-intent users on the platform aren’t even in the audience.

Is the problem the placement, or the targeting?

Search Was Transactional. Chat Is Personal.

But the audience mismatch is only the surface-level question. The deeper one is about behavior.

LLMs aren’t search engines. When someone types a query into Google, there’s a clear intent signal — they’re looking for something specific, often with commercial context. “Best running shoes under $150” is an invitation for an ad. The user expects it. The format fits.

Chat is different. People tell ChatGPT things they’d never type into Google. Health questions they’re embarrassed to ask a doctor. Career anxiety they haven’t shared with their manager. Financial stress they’re working through. Relationship dynamics they’re trying to understand. The conversational interface creates a sense of intimacy that search never had.

That intimacy is what makes the behavioral question so important. The moment users realize their conversations are shaping the ads they see, the trust dynamic shifts in a way it never did with search. With Google, we always knew our searches informed our ads — and we accepted it because search felt transactional. You type a query, you get results, some of them are paid. The transaction is visible.

Chat doesn’t feel like a transaction. It feels like thinking out loud. And the psychological distance between “my search informed an ad” and “my private conversation informed an ad” is enormous. That behavioral shift — not the early CTR — is the thing worth watching.

The Volume Problem

There’s a second issue buried in the data that most commentary glosses over. The advertiser who could spend only 3% of a $250K budget wasn’t just seeing low click-through rates; they couldn’t even get the impressions.

This tells you something important: ChatGPT’s ad inventory is still extremely limited. The free tier is large in user count but unpredictable in session behavior.

These aren’t users scrolling a feed with consistent, patternable engagement. They’re popping in with a question, getting an answer, and leaving. The ad surface is a conversation that might last thirty seconds or thirty minutes, and OpenAI is still figuring out where and when to insert a message without breaking the experience.

Compare that to Google’s ad machine, which had decades to optimize placement, timing, and targeting across billions of daily queries with clear intent signals. Judging ChatGPT’s ad model in its first few months against that benchmark is like judging Google Ads by its 2002 performance.

What to Watch

It’s way too early to call this. But there are a few things worth paying attention to as this evolves.

The free-to-paid conversion question. OpenAI converts free users to paid at a 5–6% rate. If ads degrade the free experience, that conversion rate drops, and that’s a far more expensive problem than low ad revenue. OpenAI knows this. It’s why they’re moving slowly.

The trust threshold. At what point does a user realize their conversation influenced an ad? And when that happens, does it change what they’re willing to share with the model? If users start self-censoring — keeping their real questions for the paid tier and giving the free tier only surface-level queries — the ad targeting actually gets worse, not better.

The format evolution. The current implementation is early and basic. The more interesting question is whether AI-native ad formats emerge — not banner-style interruptions, but contextually relevant suggestions woven into the conversation itself. That format doesn’t exist yet. When it does, the performance data will look completely different.

The competitive signal. AI-driven ad spend is projected to grow 63% in 2026, reaching $57 billion. The market is betting heavily that this works — eventually. The question is whether OpenAI figures out the format before advertisers lose patience.

Too Early to Call It

The 0.91% CTR is real. The volume problem is real. But declaring ads in LLMs broken based on three months of data from a casual, low-intent free-tier audience is a premature diagnosis.

The placement makes sense. The audience might be wrong. And the behavioral side — how intimacy, trust, and self-censorship reshape the user experience when ads enter the conversation — is the story that hasn’t been written yet.

I’m going to sit and watch how this evolves.